Almost a decade ago, the “Forward Deployed Engineer (FDE)” was born at Palantir. Shyam Sankar, now CTO, described the role as one that "absorbs pain and excretes product", turning frontline chaos into shipped software.

The FDE has since been rebranded across the industry as the Agent Engineer, AI Engineer, or Customer Engineer. But the underlying function matters more now than it ever has. As AI agents become mainstream in core enterprise workflows, forward deployed engineering is becoming unavoidable.

Here is why, and what it takes to get it right.

For most of the SaaS era, enterprise software was deterministic. You bought a system of record, followed the installation steps, and the software became a fixed layer in your stack. It was tedious to set up, but once it worked, it worked. Tribal knowledge formed around it: runbooks, cookbooks, Confluence pages. An operational muscle memory built around something stable.

Selling was structured the same way. An account team → a DRI → a few quarters of implementation → a predictable path to go live.

AI agents broke that model, but not in the way people usually describe it. Systems of record like Salesforce, HubSpot, and Splunk still exist. The difference is what you're deploying on top of them.

Instead of a deterministic layer that executes defined logic, you're deploying something probabilistic: something designed to do the job a human would otherwise do. To do that job well, an AI agent has to internalize more than the system of record. It has to internalize the messy, undocumented decision trace that actually makes the company function: the institutional memory, the edge cases, and the judgment calls that nobody wrote down because the person who made them didn't know they were making them.

This is where the difficulty lives, and it's compounded when the problem domain is already complex. Deploying AI to generate a first draft of a support ticket is relatively forgiving. Deploying AI to investigate a production incident across a distributed system with six teams and fifteen services is not. The agent needs to understand not just the tools, but the relationships between them. Not just the current state, but how it got there.

That is the kind of deployment where Forward Deployed Engineering isn't optional. It's the product.

The FDE’s job is to metabolize the customer’s world: to build deep domain intuition about their infrastructure, environment, and edge cases, and then horizontalize that intuition back into the product roadmap.

“In many ways, the FDE is the highest-fidelity product signal in the company.”

In enterprise AI, the speed at which Forward Deployed Engineering pain becomes product capability determines whether you build a platform company or a services company.

The immediate pushback on this FDE model is scale. If every FDE has to deeply understand each customer's environment, what happens at 500 customers?

“The answer starts with reframing what FDEs are actually doing. They aren't support. The FDE is the product's most demanding user, operating at the edge of what it can handle. Their frustrations are data. Their workarounds are roadmap items. And their most important job is to make sure that what they learn in the field doesn't stay in the field.”

In practice, this means FDEs don't just report what's broken. They author high-quality evals that translate real-world scenarios into structured benchmarks engineering can improve against. These evals are customer-specific, drawing on environment-specific context the core engineering team wouldn't otherwise have access to. They reflect the true complexity of each customer's domain. And if the product is genuinely compounding, the frontier moves outward over time. The evals FDEs create should get harder, not easier, because the product is absorbing the complexity that used to require a human.
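To make "structured benchmark" concrete, here is a minimal sketch of what an FDE-authored eval case for an incident investigation might look like. Everything in it is hypothetical: the `InvestigationEval` fields, the `grade` function, and the 50/50 split between root-cause correctness and evidence coverage are illustrative assumptions, not Resolve AI's actual eval format or scoring.

```python
from dataclasses import dataclass, field


@dataclass
class InvestigationEval:
    """One hypothetical customer-specific eval case authored by an FDE."""
    customer: str
    scenario: str               # what happened, described in the customer's terms
    signals: list[str]          # alerts, logs, or traces handed to the agent
    expected_root_cause: str    # ground truth confirmed with the customer
    required_evidence: list[str] = field(default_factory=list)


def grade(case: InvestigationEval, root_cause: str, evidence: list[str]) -> float:
    """Score from 0 to 1 (assumed scheme): half for naming the expected root
    cause, half for the fraction of required evidence the agent cited."""
    cause_score = 1.0 if root_cause == case.expected_root_cause else 0.0
    if case.required_evidence:
        cited = sum(1 for e in case.required_evidence if e in evidence)
        evidence_score = cited / len(case.required_evidence)
    else:
        evidence_score = 1.0  # nothing required, so coverage is trivially complete
    return 0.5 * cause_score + 0.5 * evidence_score


# Usage with made-up data: correct root cause, one of two evidence items cited.
case = InvestigationEval(
    customer="example-co",
    scenario="Checkout latency spiked after a cache node restart",
    signals=["p99 latency alert", "cache node restart event"],
    expected_root_cause="redis failover",
    required_evidence=["redis failover log", "p99 latency spike"],
)
print(grade(case, "redis failover", ["p99 latency spike"]))  # → 0.75
```

Encoding the customer's confirmed ground truth and required evidence, rather than only a pass/fail label, is one way such an eval could capture the environment-specific context that core engineering would not otherwise see.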

“A simple diagnostic: what fraction of your FDE output is net-new feedback versus the same issues resurfacing? If the team cycles through the same edge cases every quarter, you have a product absorption problem. But if FDEs are consistently operating at the boundary of what's technically possible, that's how services convert into product equity. That's how you build a moat that compounds.”
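The diagnostic above is simple arithmetic, and a minimal sketch of it might look like the following. It assumes, hypothetically, that each piece of FDE feedback is logged as a `(quarter, issue_key)` pair, where `issue_key` is a normalized label for the gap or edge case; the log format and the function name are illustrative, not an existing tool.

```python
from collections import defaultdict

# Hypothetical feedback log entry: (quarter label, normalized issue key).
FeedbackItem = tuple[str, str]


def net_new_fraction_by_quarter(items: list[FeedbackItem]) -> dict[str, float]:
    """For each quarter, return the fraction of feedback items whose issue_key
    never appeared in any earlier quarter (i.e. net-new, not resurfacing)."""
    by_quarter: dict[str, list[str]] = defaultdict(list)
    for quarter, key in items:
        by_quarter[quarter].append(key)

    seen: set[str] = set()
    result: dict[str, float] = {}
    # Labels like "2024-Q1" sort chronologically as plain strings.
    for quarter in sorted(by_quarter):
        keys = by_quarter[quarter]
        new = sum(1 for k in keys if k not in seen)
        result[quarter] = new / len(keys)
        seen.update(keys)
    return result


# Usage with made-up data: the Redis issue resurfaces in Q2.
log = [
    ("2024-Q1", "redis-failover"),
    ("2024-Q1", "alert-routing"),
    ("2024-Q2", "redis-failover"),
    ("2024-Q2", "service-ownership"),
]
print(net_new_fraction_by_quarter(log))  # → {'2024-Q1': 1.0, '2024-Q2': 0.5}
```

A fraction that stays low quarter after quarter corresponds to the "product absorption problem" described above; a fraction that stays high suggests FDEs keep operating at the frontier.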

The FDE's job, in this sense, is compression. Take the complexity of the real world, translate it into something the product can absorb, and progressively shrink the gap between what requires a human and what doesn't.

The FDE lifecycle is a useful diagnostic for where a company sits and what it needs to do next. The phases aren't rigid, but the progression is real.

Phase 1: Engineering-Supported Growth

At inception, engineers work hand-in-hand with every customer. The product is too raw for a formal FDE function. The company itself, from the CTO down, is the forward deployment layer. Early adopters don't expect the product to work out of the box. Significant manual effort is assumed, sometimes even deliberate overfitting to a specific customer's environment.

The moat in this phase lives in complexity. If the problem is genuinely messy and hard, it's worth solving. If the gap between human effort and product capability isn't meaningfully wide, it's often a sign there isn't a clear vision for a concrete, valuable problem yet.

This dynamic also explains why early AI tools with low integration requirements often struggled to retain customers. Low integration friction cuts both ways. What's easy to integrate is easy to replace. Products that required no forward deployment at launch often had no durable moat to show for it.

Phase 2: FDE-Supported Growth

The product can now reliably deliver value, but only when tailored to the constraints of each enterprise. Some design partner accounts are turning into large-scale production deployments. The product is beginning to ingrain itself into daily workflows.

With FDEs absorbing customer-specific complexity and translating it into evals, the core engineering team can focus on platform-level problems rather than firefighting bespoke deployments.

The turning point in this phase comes when FDEs can support more accounts simultaneously. Work that was once custom gets baked into the product through successive releases. Product capability starts to exceed the manual effort required to onboard, and you begin to see early signs of true product maturity. Lower-complexity environments require less FDE involvement, while high-complexity enterprises still justify deep forward deployment.

This is where I sit at Resolve AI. The problem we're solving, giving every engineer the ability to investigate across code, infrastructure, telemetry, and organizational knowledge simultaneously, is inherently complex. Every customer's stack is different. The services, the ownership models, the alert topology, the tribal knowledge about how things fail. There is no shortcut to understanding it.

What that means in practice is that every engagement teaches us something that becomes part of how Resolve AI understands production systems. When a customer corrects an investigation path, or explains why their Redis cluster behaves differently than expected, that correction becomes part of the system's knowledge. The FDE's job is to make sure that learning doesn't stay local. It has to get back into the product.

Part of my role involves acting as PM for a sprint team whose work is sourced from FDE feedback, which forces a direct confrontation with the highest-friction gaps every month. Watching that process work, and seeing field learning turn into product capability, is what makes forward deployment worth doing at this stage.

Phase 3: Product-Supported Growth

The final phase is what the whole lifecycle is building toward. The product works seamlessly enough that onboarding is nearly frictionless, and the platform reliably handles enterprise complexity out of the box.

Most customers can deploy without an FDE in the loop. Deals close with minimal account team involvement. FDEs are reserved for the highest-complexity, highest-stakes enterprises, where they're extending a strong platform rather than compensating for gaps.

FDEs can then earn the right to solve more important problems for the company they are embedded in. The product now handles low-lift environments out of the box, while still scaling into the most demanding integrations.

From here, the challenge shifts to reinvention: expanding into new workflows, restarting the cycle, and making sure forward deployment continues to convert frontier complexity into durable product advantage rather than coasting on what's already been absorbed.

At the end of this lifecycle, if the gap between product maturity and human involvement hasn't meaningfully widened, you've effectively become a services company. That usually means product execution fell short, or that there was a persistent disconnect between what FDEs were experiencing in the field and what was actually getting prioritized.

But if that gap has grown, it means the product has absorbed the complexity and now sits on the mission-critical path of large enterprises. That's the outcome worth building toward.

The best FDEs think in first principles, build durable primitives, and compound learnings across customers. The best companies treat their FDE team as an extension of engineering, not an extension of sales, because that's what they actually are.

One of the reasons I joined Resolve AI at this stage was that the line between field learning and product capability is direct and short. The problem is hard enough that what I learn in each engagement genuinely matters to what gets built. That's a rare thing, and it's worth seeking out. I’ve been lucky enough to witness this as we move along the lifecycle, and to help build the function from scratch alongside a super talented team at Resolve AI. If this resonates, I’d love to chat more!

Hear AI strategies and approaches from engineering leaders at FinServ companies including Affirm, MSCI, and SoFi.

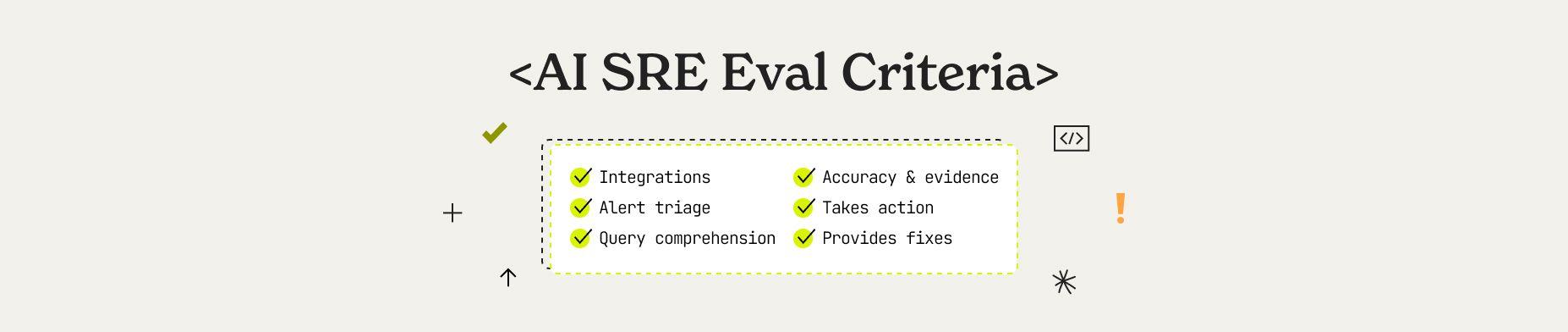

Learn how to evaluate an AI SRE to ensure they run in your unique production environments. This guide explains the five agentic pillars, six key evaluation dimensions, and enterprise readiness criteria that separate production-ready AI SRE from experiments.

100 Engineering software engineering executives joined Resolve AI and other luminary leaders to discuss the accelerated evolution of agentic AI in software engineering from coding to managing production systems.