Launching Resolve AI Labs, backed by a new $40M Series A extension

Today, we're introducing Resolve AI Labs. AI made building software faster. We're here to do the same for running it.

Engineers are on call for systems they didn't write. More and more of that code isn't written by a human at all. The environments keep growing, the dependencies keep getting more tangled, and nobody has a clean mental model of any of it. The ceiling on engineering productivity was never writing code. It was always operating what gets written. Building AI production agents with the accuracy, reliability, and control that enterprises demand requires foundations that don't exist yet.

We started Resolve AI Labs with a clear mission: the next frontier is operating software and managing it at scale. Our goal is to enable AI systems that safely and reliably operate the world's production software, freeing engineers to innovate more.

Frontier models, pointed at infrastructure, get you surprisingly far. And then they hit a wall.

Diagnosing an incident isn't a question you ask a model. It's an investigation. You form hypotheses across logs, metrics, traces, and topology. You revise them as evidence shows up. You have to know when you're confident enough to act, and when you're not. All of this under time pressure, with noisy and incomplete data, where getting it wrong means real downtime and real customer impact. That kind of reasoning doesn't emerge from prompting a frontier model. It has to be trained.

At Resolve AI Labs, we're developing AI systems that can reason about production infrastructure the way the best engineers do and, over time, across more context than any team of engineers can hold in their heads. As a first step, this means domain-specific models for causal reasoning, our own verifier models for evaluating the quality of open-ended investigations, and orchestration frameworks that coordinate multiple agents across fragmented evidence in real time. Evaluation is especially hard: in production, there's rarely a clean "right answer" to train against. Engineers disagree on the root cause. Postmortems get revised months later. The reward signal itself is an open research problem.

The data problem is just as hard. Production incidents are rare by definition, and the ones most worth learning from happen least often. Their telemetry is ephemeral: logs rotate, metrics retention windows close. The exact conditions of an incident from six weeks ago are, in most cases, gone. The data is also sensitive, and it often can't leave a customer's environment. You can't brute-force this with scale. So we're building simulation and replay infrastructure that lets us train and evaluate durably, independent of which data still exists.

These aren't engineering problems you can solve with a better wrapper. They're open research problems. Our founding team comes from Meta Superintelligence Labs and Google DeepMind, alongside engineers with deep expertise in observability and infrastructure. We need both.

The goal is a fundamentally different operating model for production software. An AI system that can investigate, reason, and act across the full production environment, continuously, without waiting for a human to step in.

That means agents increasingly take point on the operational load that falls on engineers by default today: the 3 a.m. pages, the multi-hour investigations, the context switching that makes it hard to build anything new. The best engineers should be setting policy, handling true exceptions, and building forward, not just keeping up.

We are moving from Human-in-the-Loop to Human-on-the-Loop, and Resolve AI Labs is how we get there.

Resolve AI Labs is hiring researchers and engineers to work on complex reasoning, long-horizon multi-agent interactions, RL over massive environments, and ambiguous evaluations. If these problems interest you, we'd like to talk.

Hear AI strategies and approaches from engineering leaders at FinServ companies including Affirm, MSCI, and SoFi.

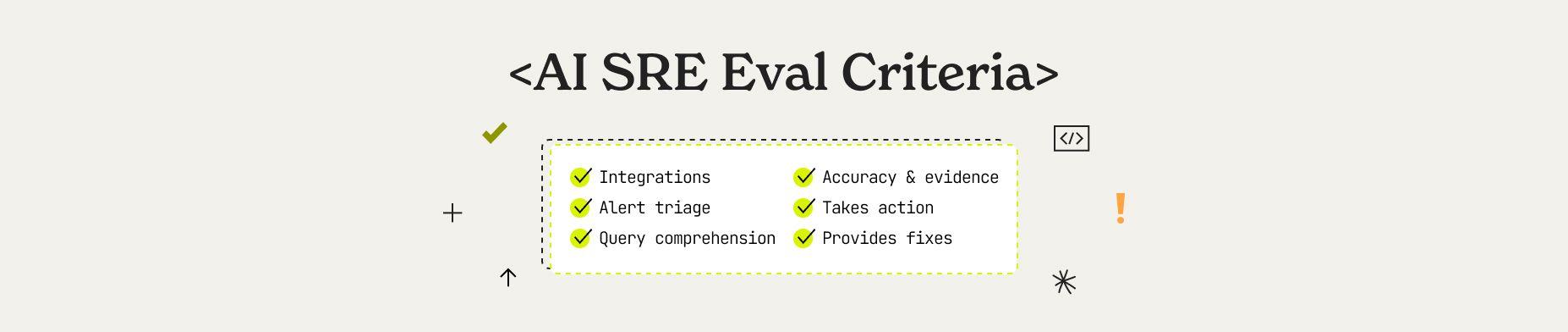

Learn how to evaluate an AI SRE to ensure they run in your unique production environments. This guide explains the five agentic pillars, six key evaluation dimensions, and enterprise readiness criteria that separate production-ready AI SRE from experiments.

100 Engineering software engineering executives joined Resolve AI and other luminary leaders to discuss the accelerated evolution of agentic AI in software engineering from coding to managing production systems.