
With vibe coding, turning intent into product has never been easier or faster. AI coding assistants and tools like Replit have made prototyping faster than ever, but writing code is only one part of a software developer's job. We cannot move faster until we also accelerate the operational side of software development, which is getting harder as more AI-generated code ships into production.

Incidents are stressful and slow developers down. But the real killer to velocity is the constant stream of interruptions and ambiguous questions about production: "Why are pages rendering slow today?" or "Is this performance dip related to the feature flag I just enabled?" or "What's causing the latency to suddenly spike?" or "Which of the code changes that just landed is consuming the error budget?"

Answering these questions means going dashboard by dashboard, digging through recent changes, diving into unfamiliar codebases, and shoulder-tapping colleagues. These daily interruptions are the true "death by a thousand cuts" preventing effortless software development.

Vibe debugging is the process of using AI to investigate any software issue, from understanding code to troubleshooting the daily incidents that disrupt your flow. In a natural language conversation, an AI-powered agent translates your intent (whether a vague question or a specific hypothesis) into the necessary tool calls, analyzes the resulting data, and delivers a synthesized answer.

Vibe debugging is a new paradigm in software engineering that collapses the entire investigative loop, from forming a hypothesis to validating it with evidence, into a fluid conversation with artificial intelligence.

Think about what traditional coding workflows actually demand. Coders investigate issues one hypothesis at a time: pull up a dashboard, check a log, switch frameworks, repeat. Every step is manual, serial, and expensive. Large language models and agentic AI have created a smarter path.

In vibe debugging, AI acts as a trusted partner between you and your production system, using deep context to handle the tactical, time-consuming tasks of investigation. Instead of pursuing one path at a time, it explores all relevant hypotheses in parallel, simultaneously gathering evidence from code, querying live telemetry, cross-referencing deployment history, and surfacing insights from past incidents. Without waiting for the next instruction, it proactively drives the investigation, highlighting correlations you might not have thought to look for.

Let's look at a real-world example where Resolve AI was used to investigate a failed deployment of a service (svc-analysis).

It went from telemetry all the way to an actionable fix in a single conversation:

When I asked why the deployment failed, Resolve AI identified the node-canvas error as the root cause and pointed to the file (.github/src/base/node-prod/Dockerfile) and line numbers to apply the fix.

The result is that the complex, multi-threaded investigative loop (hypothesis -> evidence -> validation) between a question and an answer is abstracted into a single-threaded conversation with Resolve AI. This radically reduced the time to investigate, and I didn't need to be an expert in Node.js native dependencies or the specific Docker image structure. Resolve AI acted as the expert for all of it.

Vibe debugging with agents like Resolve AI makes debugging as natural as asking a question of one of your most experienced teammates: someone who knows your production systems intimately, helps you reason through possibilities, and gathers relevant evidence without requiring perfect knowledge of the underlying system. The difference is that you never have to interrupt them.

Vibe debugging changes how engineers interact with their systems at every stage of an investigation. Here's how it plays out across a real-world use case with Resolve AI:

It meets you where you are, whether your starting point is a human question, an automated alert, or something as open-ended as "Can you check why the UI has been slow in the last 2 hours?"

In this case, Steven uses an alert from Grafana as a starting point. He initiates a conversation to challenge the alert's premise and build his own hypothesis:

Resolve AI returns a synthesized analysis in real time, confirming that the issue is not widespread and that CPU is normal. Steven instantly discards the initial theory and forms a more specific one based on evidence. A machine-generated alert becomes an evidence-based hypothesis, shaped through a natural language conversation.

To answer a single question manually, an engineer begins a serial investigation: find deployment history, read through commits, switch to a metrics tool, look for memory spikes, try to correlate timestamps. These are tedious workflows that every team knows well.

Resolve AI investigates multiple hypotheses in parallel, simultaneously querying several sources, including svc-entity-graph itself and svc-entity-graph-ingest (for correlated network traffic anomalies).

It understood the request and simultaneously queried deployment systems, parsed Git history and feature flag changes, analyzed historical memory metrics, and cross-referenced all the timestamps. Resolve AI collapsed what would have taken a lot of time in manual, sequential work into a single action.
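The parallel fan-out described above can be sketched in Python with asyncio. This is a minimal illustration under stated assumptions, not Resolve AI's implementation: the function names (check_deployments, check_git_history, check_memory_metrics), the canned findings, and the timestamps are hypothetical stand-ins for real tool calls.

```python
import asyncio

# Hypothetical evidence gatherers. In a real agent each would call an
# external system; here they return canned data after a short delay.
async def check_deployments(service: str) -> dict:
    await asyncio.sleep(0.01)
    return {"source": "deployments", "service": service, "events": ["rollout at 10:02"]}

async def check_git_history(service: str) -> dict:
    await asyncio.sleep(0.01)
    return {"source": "git", "service": service, "events": ["1 recent commit"]}

async def check_memory_metrics(service: str) -> dict:
    await asyncio.sleep(0.01)
    return {"source": "metrics", "service": service, "events": ["memory rising since 10:05"]}

async def investigate(service: str) -> list[dict]:
    # Run every check concurrently instead of one after another.
    # asyncio.gather returns results in the order the awaitables were passed.
    return await asyncio.gather(
        check_deployments(service),
        check_git_history(service),
        check_memory_metrics(service),
    )

def run_investigation(service: str) -> list[dict]:
    """Synchronous entry point for the concurrent investigation."""
    return asyncio.run(investigate(service))

if __name__ == "__main__":
    for finding in run_investigation("svc-entity-graph"):
        print(finding["source"], finding["events"])
```

Because the three checks wait on I/O at the same time, the total wall-clock time is roughly that of the slowest check rather than the sum of all three, which is the gain over a serial, tab-by-tab investigation.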

Vibe Debugging abstracts away the complex, multi-tool investigation that engineers are typically forced to do manually. Let's look at the example where Iain asks Resolve AI if recent changes to svc-entity-graph led to increased memory usage.

While Iain asked a single question in plain English, Resolve AI performed a multi-step evidence-gathering process in the background, as shown in its work log:

The screenshot below reveals what was abstracted away from the user. Behind the scenes, Resolve AI began by orienting itself, identifying the correct resource (svc-entity-graph as a kube_deployment in prod) and setting the precise time range for the investigation.

From there, it initiated a parallel investigation, automatically querying completely different systems. Its findings included 1 recent commit, and it reviewed 13 different charts to pinpoint the 2 that were most relevant to a memory issue.

Finally, it confirmed the entire theory by tracking the reversion of that same change in the CI/CD pipeline and linking it to the system's immediate stabilization.

Iain never had to open a single one of these tabs, and he never touched the underlying tools or their specific query languages. Resolve AI acted as the translator, converting his question into dozens of underlying queries against the right systems.

Vibe debugging needs your operational history to be a queryable resource, so that it can investigate issues that are not confined to the present moment. For example, in the investigation below Steven starts by asking a question that is explicitly comparative and historical:

Resolve AI performs a complex, time-based analysis. As it states in its plan, Resolve AI updates its time range to "cover both the recent period and the timeframe from 3 hours ago so we can compare".

Resolve AI then builds a multi-hour narrative that contrasts the two periods. It identifies that the severe "OOM (out-of-memory) events" were happening earlier but have now ceased, while a more gradual "possible memory leak" persists. This ability to perform a comparative analysis over custom time windows is critical for understanding regressions, chronic issues, and the impact of changes. A traditional chatbot with a static knowledge base would struggle with such analyses.
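The two-window comparison described above can be sketched as follows. This is a simplified illustration, not how Resolve AI computes its analysis: the Window type, the slope heuristic, and all sample values are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Window:
    label: str
    memory_mb: list[float]  # evenly spaced memory samples (hypothetical data)
    oom_events: int         # count of out-of-memory events in the window

def slope(samples: list[float]) -> float:
    """Average change between consecutive samples."""
    if len(samples) < 2:
        return 0.0
    return mean(b - a for a, b in zip(samples, samples[1:]))

def compare(earlier: Window, recent: Window) -> dict:
    """Contrast two periods: did OOMs stop, and is memory still creeping up?"""
    return {
        "oom_ceased": earlier.oom_events > 0 and recent.oom_events == 0,
        "possible_leak": slope(recent.memory_mb) > 0,
        "earlier_slope": slope(earlier.memory_mb),
        "recent_slope": slope(recent.memory_mb),
    }

# Made-up samples: a sawtooth with OOM kills earlier, a steady climb recently.
earlier = Window("3 hours ago", [900.0, 1400.0, 600.0, 1500.0, 700.0], oom_events=4)
recent = Window("recent", [700.0, 720.0, 745.0, 760.0, 790.0], oom_events=0)

if __name__ == "__main__":
    print(compare(earlier, recent))
```

A positive slope alone is a weak signal, so a real analysis would likely also account for traffic and garbage-collection cycles; the sketch only shows why both windows have to be queried before the two findings can be told apart.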

Resolve AI doesn't just return a list of deployments and a chart of memory usage. It synthesizes these disparate data points into a single, actionable narrative. After its initial investigation, Resolve AI presented its findings, culminating in a conclusion:

This conclusion, "The timing and nature of the rollout directly correlates with the memory surge," synthesizes evidence from deployment history, configuration changes, and monitoring data into a single, confident narrative.
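One simple way to picture the timing half of that conclusion is a check for changes that landed shortly before the surge began. The sketch below is an assumption-laden illustration, not Resolve AI's method: the change records, the timestamps, and the 15-minute window are all hypothetical.

```python
from datetime import datetime, timedelta

def changes_before_surge(changes, surge_start, window=timedelta(minutes=15)):
    """Return changes that landed within `window` before the surge began."""
    return [
        c for c in changes
        if timedelta(0) <= surge_start - c["at"] <= window
    ]

# Hypothetical change log and surge time.
changes = [
    {"kind": "deploy", "name": "unrelated-service", "at": datetime(2025, 1, 1, 8, 0)},
    {"kind": "feature_flag", "name": "example-flag", "at": datetime(2025, 1, 1, 10, 2)},
    {"kind": "deploy", "name": "later-rollout", "at": datetime(2025, 1, 1, 10, 30)},
]
surge_start = datetime(2025, 1, 1, 10, 5)

if __name__ == "__main__":
    for change in changes_before_surge(changes, surge_start):
        print(change["kind"], change["name"], change["at"].isoformat())
```

Temporal proximity only nominates suspects. The narrative in the text also relies on the nature of the change, and later on the observation that reverting it stabilized the system.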

This leads to the second act of synthesis: Resolve AI now synthesizes operational knowledge. It provides a detailed, safe procedure for disabling the flags, complete with best practices ("coordinate with your team," "use a low-traffic period") and a pre-planned Rollback Plan.

This is the essence of Vibe Debugging. First, Resolve AI synthesizes data into a clear diagnosis, and then, based on human direction, it synthesizes knowledge into a safe resolution plan. It partners with the engineer through the entire lifecycle of the problem.

Iain's and Steven's interactions, from the complex initial question to the follow-up about how to safely disable the flags, happened entirely as conversations on their phones. This natural language interface is the key that unlocks all the underlying power. It makes a deep, historical, multi-system investigation as simple as having a conversation, empowering any engineer to diagnose issues that would previously have required a select group of domain experts.

The rise of generative AI has made the first half of the engineering lifecycle nearly frictionless. Tools like ChatGPT, OpenAI's APIs, and GitHub Copilot have turned vibe coding into a default mode for building. Startups are prototyping entire products in days. Developers use AI to generate working code across programming languages they've never written in, iterate rapidly, and ship features faster than traditional coding ever allowed.

But that fluency with vibe coding has also exposed a painful imbalance.

The faster we write code, the more painfully apparent the friction becomes on the other side of the loop. What good is writing a feature in five minutes if it takes five hours to understand why it's failing in staging? The problem is that AI-generated code often trades maintainability and security for speed. It can produce functional code that ships fast but introduces technical debt: incomplete refactoring, fragile dependencies, and logic that works until it doesn't. Without a proper code review process or production-ready validation, that debt accumulates silently until something breaks.

Andrej Karpathy, who coined the term vibe coding, framed it simply: you describe what you want, an LLM writes it, and you iterate until it works. The catch is that LLMs are optimizing for working code that satisfies the prompt, not for long-term functionality, observability, or the ability of experienced developers to reason about it later. The same is true across frameworks, whether you're using Replit for a quick prototype or building production Python with a more sophisticated coding tool.

This is where a holistic AI strategy becomes critical. To use AI effectively across the full software development lifecycle, you need a counterbalance: AI-powered intelligence that makes the production side as fluid as the code generation side. Vibe coding handles the build. Vibe debugging handles what comes after.

Systemically, vibe debugging provides the safety net to move at the speed of vibe coding. With agentic AI like Resolve AI, capable of understanding context, reasoning through production systems, and learning from every interaction, the time and cognitive load of every debugging scenario plummets. Feedback loops that used to take hours compress into minutes.

When the process of working through production issues is no longer slow, isolating, and adversarial, the fear of breaking things diminishes. The focus shifts from "who caused the problem?" to "how quickly can we understand and solve it together?" The academic blameless postmortem evolves into a collaborative investigation. Here's a quick example of how that can even be fun.

Resolve AI is acting as a knowledgeable, culturally aware participant. The simple act of delegating a question to AI becomes a safe, impartial move, turning a moment of blame into a moment of collective, dark humor.

The incident lifecycle doesn't end when the problem is solved. The request for a poem for "our savior justin" is about closing the emotional loop. That's the real answer to why vibe debugging matters. You get more than a smart tool. You get a cultural catalyst, an environment where fixing production issues becomes a collaborative, sometimes even fun, learning session.

Resolve AI, founded by the co-creators of OpenTelemetry, is building AI agents for software engineering.

Resolve AI understands your production environments, reasons like your seasoned engineers, and learns from every interaction to give your teams decisive control over on-call incidents with autonomous investigations and clear resolution guidance. Resolve AI also helps you ship quality code faster and improve reliability by revealing hidden system context and operational behaviors.

With Resolve AI, customers like DataStax, Tubi, and Rappi have increased engineering velocity and systems reliability by putting machines on-call for humans and letting engineers just code.


Steven Karis

Chief Architect & Founding Engineer

Steven is a founding engineer at Resolve AI. He is focused on building the agentic AI systems that power Resolve's AI Production Engineer. He has previously held engineering roles at Splunk and Uber.

Varun Krovvidi

Product Marketing Manager

Varun is a product marketer at Resolve AI. As an engineer turned marketer, he is passionate about making complex technology accessible by blending technical fluency with storytelling. Most recently, he was at Google, bringing the story of multi-agent systems and products like the Agent2Agent protocol to market.

Hear AI strategies and approaches from engineering leaders at FinServ companies including Affirm, MSCI, and SoFi.

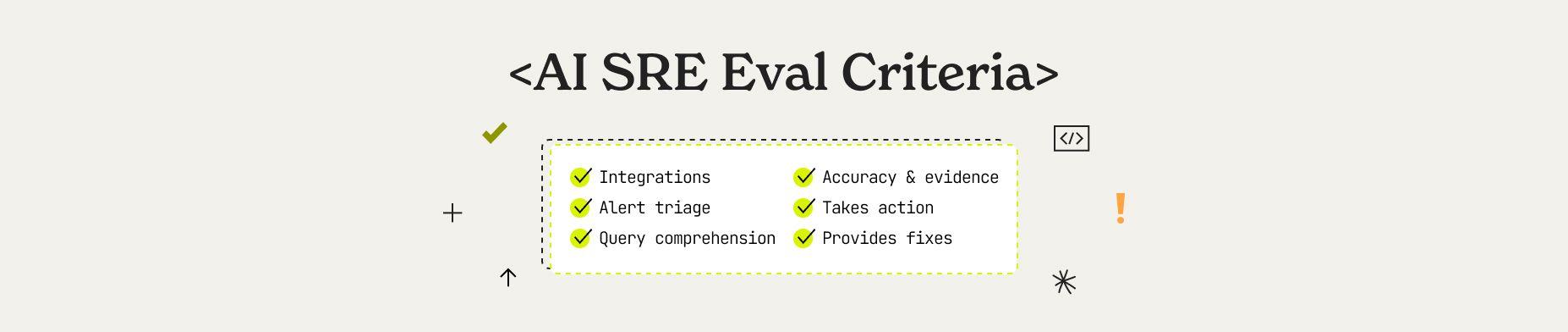

Learn how to evaluate an AI SRE to ensure they run in your unique production environments. This guide explains the five agentic pillars, six key evaluation dimensions, and enterprise readiness criteria that separate production-ready AI SRE from experiments.

100 Engineering software engineering executives joined Resolve AI and other luminary leaders to discuss the accelerated evolution of agentic AI in software engineering from coding to managing production systems.